What Changes in CX When AI Handles First Response

HAPP AI Team

Customer Success

Over the past five years, customer communication has undergone a structural transformation—although for many companies it has happened almost unnoticed.

In 2018–2019, AI in customer support mostly meant scripted chatbots that answered FAQs and took load off first-line support. By 2023–2025 the picture had changed fundamentally. With large language models (LLMs) and agentic orchestration systems, AI stopped being a tool for deflecting requests and became a full first point of contact. In many enterprises today, AI is the first to interact with the customer, often through a chat assistant.

This is not a cosmetic change. Handing the first response to AI transforms three basic parameters of customer communication: response latency, answer quality, and escalation logic. Together these factors directly affect conversion, revenue, and long-term customer value.

Response latency drops sharply and that changes customer behaviour

Response time has always mattered, but in the AI era the bar has moved. Before widespread AI adoption, customers were willing to wait minutes or even hours. In enterprise live chat, average first response time often ranged from 2 to 8 minutes at peak. Email could take 6–24 hours.

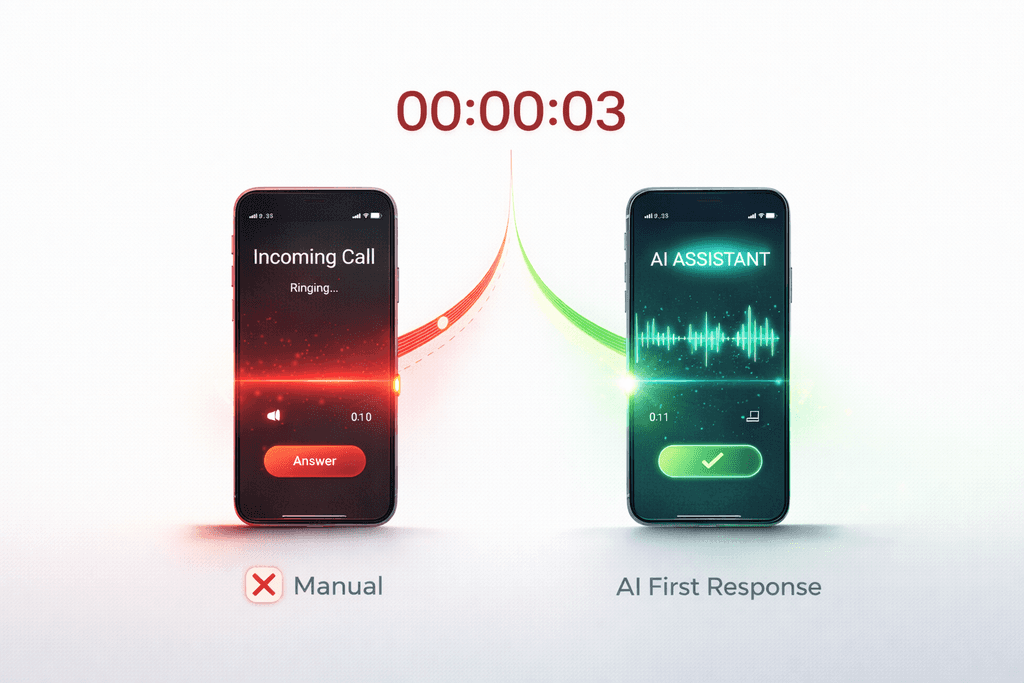

When AI gives the first response, latency drops to seconds. That is not just optimization; it changes the customer's behavioural model.

In 2022 Shopify reported that merchants using AI assistants during peak sales cut average first response time from several minutes to under 10 seconds. The effect showed up not only in satisfaction metrics but in conversion: a fast answer at the moment of doubt during checkout reduced abandoned carts. A similar effect was seen at H&M in 2021–2022 after scaling an AI assistant in digital channels.

Response latency directly affects perceived brand reliability. An instant reaction reduces "cognitive drift"—the moment when the customer loses focus and abandons the intent to buy. AI-first response effectively removes that window of distraction. For e‑commerce and service companies this is no longer just a support metric. It is a revenue factor.
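To make the latency claim measurable, first response time can be computed directly from conversation timestamps. The following is a minimal sketch under stated assumptions: the log format, the sample timestamps, and the 10-second threshold used for the share calculation are all hypothetical and chosen only for illustration.

```python
from datetime import datetime
from statistics import median


def first_response_seconds(opened_at: str, first_reply_at: str) -> float:
    """Seconds between the customer's first message and the first reply."""
    opened = datetime.fromisoformat(opened_at)
    replied = datetime.fromisoformat(first_reply_at)
    return (replied - opened).total_seconds()


# Hypothetical log: (customer message time, first reply time), ISO 8601.
conversations = [
    ("2025-01-10T12:00:00", "2025-01-10T12:00:04"),  # AI first response
    ("2025-01-10T12:01:30", "2025-01-10T12:01:37"),  # AI first response
    ("2025-01-10T12:05:00", "2025-01-10T12:09:00"),  # waited in a human queue
]

latencies = [first_response_seconds(a, b) for a, b in conversations]

# The median is less distorted by a single slow conversation than the mean.
median_frt = median(latencies)

# Share of conversations answered in under 10 seconds (assumed threshold).
share_under_10s = sum(1 for s in latencies if s < 10) / len(latencies)
```

Tracking the median alongside the share of conversations under a target threshold shows both the typical experience and how many customers still fall into the slow tail.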

Answer quality becomes systemic, not individual

In the human model, quality depends on the specific agent, their experience, training, workload, and even fatigue. When AI shapes the first response, variability shrinks.

A telling example is Morgan Stanley: an internal GPT‑4-based AI assistant, integrated with over 100,000 internal documents via a retrieval architecture, let advisors get structured, contextually relevant answers instantly. In customer communication a similar approach keeps policies consistent, standardizes explanations of pricing and returns, and keeps answers aligned with current data. Quality becomes a function of the system, not of the individual. For regulated industries such structure reduces regulatory risk.
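The idea of answers grounded in a shared document store can be sketched in a few lines. This is a toy illustration only, not a description of Morgan Stanley's system: the policy snippets, the stopword list, and the keyword-overlap scoring are assumptions made for the example, and production retrieval typically relies on embeddings and a dedicated index.

```python
import re
from typing import Dict, Optional, Set, Tuple

# Hypothetical policy snippets; a real system would index many documents.
POLICY_DOCS: Dict[str, str] = {
    "returns": "Items can be returned within 30 days of delivery with a receipt.",
    "shipping": "Standard shipping takes 3 to 5 business days.",
    "pricing": "Prices include VAT; discounts apply at checkout.",
}

# Small assumed stopword list so common words do not drive the match.
STOPWORDS: Set[str] = {
    "a", "the", "of", "to", "with", "at", "be", "can", "is", "do", "does", "how",
}


def tokenize(text: str) -> Set[str]:
    """Lowercase word tokens with stopwords removed."""
    return set(re.findall(r"[a-z0-9]+", text.lower())) - STOPWORDS


def retrieve(question: str, docs: Dict[str, str]) -> Optional[Tuple[str, str]]:
    """Return (doc_id, text) of the best keyword match, or None if nothing matches."""
    query = tokenize(question)
    best_id, best_score = None, 0
    for doc_id, text in docs.items():
        # Score is the number of query words found in the document or its id.
        score = len(query & (tokenize(text) | {doc_id}))
        if score > best_score:
            best_id, best_score = doc_id, score
    if best_id is None:
        return None
    return best_id, docs[best_id]
```

Because every answer is drawn from the same source text, two customers asking the same question receive the same policy wording. A question with no match returns `None`, which is a natural signal to hand the conversation to a person rather than guess.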

Escalation becomes architectural, not situational

In the traditional model the agent decides when to escalate a request, based on their own judgment. In an AI-first model escalation is built into the system through confidence scoring, intent detection, and complexity classification. Escalation stops being a reaction; it becomes a design choice.

American Express scaled AI in support over 2020–2023: most standard requests were automated, but high-risk or financially sensitive ones were automatically passed to live agents. For the COO this raises governance questions: at what confidence level does the model hand off to a human, which transaction types are never handled by AI, and which customer segments are human-first?
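These governance questions map naturally onto explicit configuration. The sketch below shows one way to express them, assuming a hypothetical 0.80 confidence threshold, hypothetical intent names, and a hypothetical "premium" segment. None of these values come from American Express or any specific product.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class EscalationPolicy:
    """Governance decisions expressed as data (all values are assumptions)."""
    min_confidence: float = 0.80
    never_ai_intents: frozenset = frozenset({"chargeback_dispute", "account_closure"})
    human_first_segments: frozenset = frozenset({"premium"})


@dataclass(frozen=True)
class Request:
    intent: str        # output of an intent detector (hypothetical labels)
    confidence: float  # model confidence in its own answer, 0.0 to 1.0
    segment: str       # customer segment


def route(request: Request, policy: EscalationPolicy) -> Tuple[str, str]:
    """Return (destination, reason) so every handoff can be logged and audited."""
    if request.segment in policy.human_first_segments:
        return "human", "human_first_segment"
    if request.intent in policy.never_ai_intents:
        return "human", "intent_excluded_from_ai"
    if request.confidence < policy.min_confidence:
        return "human", "low_confidence"
    return "ai", "within_policy"


policy = EscalationPolicy()
routine = route(Request("order_status", 0.95, "standard"), policy)
sensitive = route(Request("chargeback_dispute", 0.99, "standard"), policy)
uncertain = route(Request("order_status", 0.55, "standard"), policy)
vip = route(Request("order_status", 0.95, "premium"), policy)
```

Keeping the thresholds and exclusion lists in a policy object, rather than scattered through code, means leadership can review and change them without touching the routing logic. Returning a reason with each decision supports observability of handoff points.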

Conversion—the hidden KPI

Most companies evaluate AI-first response through the lens of cost reduction, but the main effect often shows up in conversion. Three mechanisms drive it. First, handling doubt at checkout: an instant answer on size, delivery, or guarantees can prevent cart abandonment, and well-tuned AI systems can recover 15–30% of abandoned carts. Second, speed of response to B2B leads: a reply within the first 5 minutes significantly increases the likelihood of qualifying the lead. Third, post-purchase confidence: instant answers reduce returns and improve retention.
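A quick back-of-the-envelope calculation shows why the 15–30% recovery range matters commercially. The cart count and average order value below are hypothetical inputs chosen only to illustrate the arithmetic; substitute your own figures.

```python
def recovered_revenue(
    abandoned_carts: int, avg_order_value: float, recovery_rate: float
) -> float:
    """Revenue recovered if a given share of abandoned carts converts."""
    if not 0.0 <= recovery_rate <= 1.0:
        raise ValueError("recovery_rate must be between 0 and 1")
    return abandoned_carts * recovery_rate * avg_order_value


# Hypothetical month: 1,000 abandoned carts, average order value of 60.
ABANDONED_CARTS = 1_000
AVG_ORDER_VALUE = 60.0

low_estimate = recovered_revenue(ABANDONED_CARTS, AVG_ORDER_VALUE, 0.15)
high_estimate = recovered_revenue(ABANDONED_CARTS, AVG_ORDER_VALUE, 0.30)

print(f"Recovered revenue: {low_estimate:,.0f} to {high_estimate:,.0f} per month")
```

Under these assumed inputs, the range works out to roughly 9,000 to 18,000 per month in the store's currency, which is why conversion can outweigh support cost savings as the metric to watch.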

Lower latency + structured quality + controlled escalation = revenue stability; conversion often turns out to be the main KPI of AI-first communication.

Side effect: team redesign

AI-first communication does not remove people; it changes their role. Routine requests move to AI, while complex, emotional, or financially significant cases stay with humans. Agents need deeper training, and observability of AI decisions and handoff points becomes more important.

Evolution 2018–2025

2018–2019: scripted chatbots for FAQ.

2020–2021: pandemic-driven acceleration of digital support.

2022: scaling LLMs for support and knowledge retrieval.

2023–2024: AI becomes the first tier of response in e‑commerce and financial services.

2025: AI-first communication becomes the expected standard in high-volume digital business.

The experimentation phase is over.

What has fundamentally changed

The AI-first model shifts customer communication from "human queue → variable quality → reactive escalation" to "instant system → structured knowledge → designed escalation." That changes perceived service speed, conversion likelihood, cost structure, team model, and level of control. Communication stops being only a cost centre and becomes an operational layer directly tied to revenue.

The strategic question for 2026

The question is no longer whether AI should give the first response. The real question for leaders is: is your first line of communication designed deliberately—or did it emerge by default? When AI becomes the "front door" for the customer, it stops being a support tool. It becomes infrastructure. Customer communication becomes conversion architecture.
