No AI Strategy? You Have API Dependency

HAPP AI Team


In 2023, integrating generative AI meant experiments. In 2024 it meant rolling copilots out into workflows. In 2025 it meant mass adoption of external LLM APIs for CRM, customer support, analytics, document workflows, and operations.

By early 2026 a structural fact is clear: many firms that claim an “AI strategy” have instead built their processes on dependence on a handful of external model providers.

That is not a strategy for autonomy. It is infrastructure dependency—and that difference defines your level of control.

From cloud dependency to model dependency

Corporate IT has already lived through concentration risk. In 2010–2020 the public cloud market consolidated around three hyperscalers—Amazon Web Services, Microsoft Azure, and Google Cloud. By 2025, according to Synergy Research Group, those three controlled roughly two-thirds of the global cloud infrastructure market.

Companies responded with multi-cloud strategies, redundancy, and sovereign architectures. Even then, migrating between clouds took years.

AI has gone through consolidation much faster.

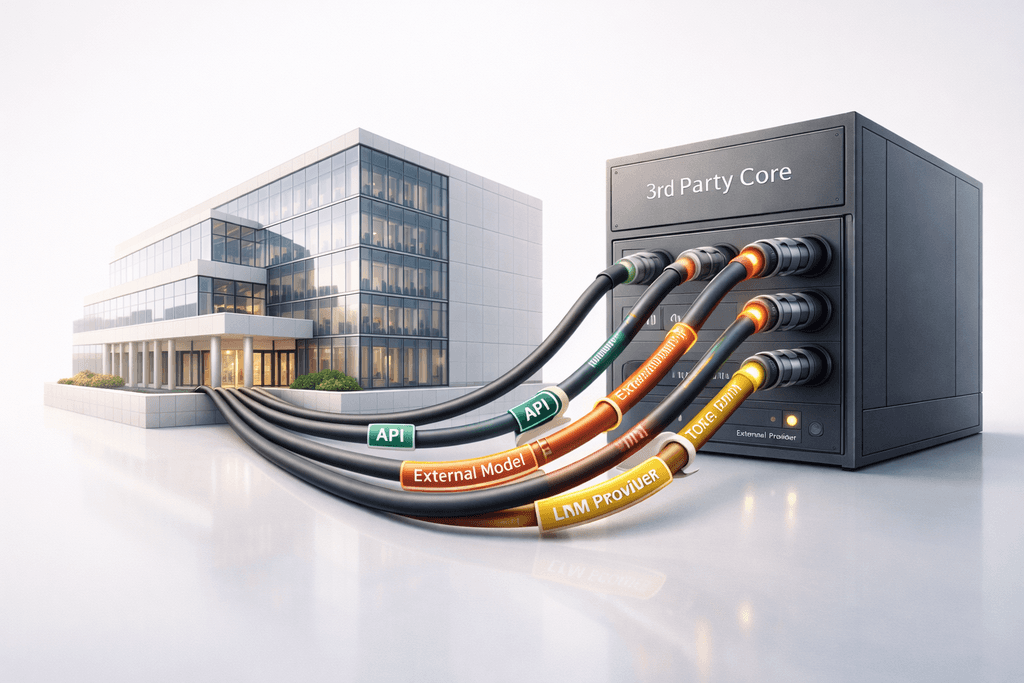

Per IDC (AI Infrastructure Survey 2025), over 70% of mid-size and large companies using generative AI rely primarily on third-party API models rather than in-house or self-hosted solutions. That means the cognitive core of their processes—reasoning and decision logic—is effectively outside the organization.


Cloud providers sell compute. Model providers sell inference and reasoning. The latter is far harder to replace.

Training frontier models in 2025 required billions in capital. Training GPT-4 alone, by independent estimates, cost over $100M in compute. Gemini demanded comparable infrastructure. Even fine-tuning or deploying open-weight models in production needs GPU clusters and MLOps teams most companies do not have.

Dependency is shifting from infrastructure to cognition.

Price volatility as an operational risk

API dependency has measurable financial impact.

In late 2025 several LLM providers revised pricing for high-volume clients. Officially this was framed as resource optimization. In practice, some enterprises saw cost increases of 20–35% in certain scenarios—especially long-context and multilingual use cases.

For companies using AI in voice communication, token volume is huge. A contact center with 500,000 calls per month, where each interaction includes transcription, contextual reasoning, and response generation, can consume tens of millions of tokens per day. A small tariff change can mean six- or seven-figure annual swings.
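The arithmetic behind that claim can be sketched as follows. Only the 500,000 calls per month and the 20–35% range come from the text; the tokens-per-call figure and the blended price per million tokens are illustrative assumptions, not any provider's actual tariff.

```python
# Illustrative cost-sensitivity sketch.
CALLS_PER_MONTH = 500_000        # from the contact-center scenario above
TOKENS_PER_CALL = 3_000          # ASSUMPTION: transcription + reasoning + response
USD_PER_MILLION_TOKENS = 30.0    # ASSUMPTION: blended input/output price


def tokens_per_day(calls_per_month: int, tokens_per_call: int, days: int = 30) -> float:
    """Average daily token volume for a month of `days` days."""
    return calls_per_month * tokens_per_call / days


def annual_cost_usd(calls_per_month: int, tokens_per_call: int,
                    usd_per_million_tokens: float) -> float:
    """Yearly spend at a flat per-token price."""
    tokens_per_year = calls_per_month * 12 * tokens_per_call
    return tokens_per_year / 1_000_000 * usd_per_million_tokens


def annual_swing_usd(baseline_usd: float, increase_pct: float) -> float:
    """Extra yearly spend caused by a percentage price increase."""
    return baseline_usd * increase_pct / 100


if __name__ == "__main__":
    baseline = annual_cost_usd(CALLS_PER_MONTH, TOKENS_PER_CALL, USD_PER_MILLION_TOKENS)
    print(f"tokens/day: {tokens_per_day(CALLS_PER_MONTH, TOKENS_PER_CALL):,.0f}")
    print(f"baseline/year: ${baseline:,.0f}")
    for pct in (20, 35):
        print(f"+{pct}% -> ${annual_swing_usd(baseline, pct):,.0f} more per year")
```

Under these assumed inputs the volume is about 50 million tokens per day, the baseline is about $540,000 per year, and a 20–35% increase adds roughly $108,000 to $189,000. The result scales linearly with both assumptions, so real figures should be substituted before drawing conclusions.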

SaaS pricing is often fixed by contract. LLM APIs stay dynamic—rate limits, context windows, and premium features change.

This is not just budget instability. It is strategic vulnerability.

Latency and degradation: risk at the customer edge

Beyond cost, there is performance risk.

In January 2026 some European enterprises reported temporary latency spikes when calling external LLM APIs at peak times. These were not full outages, but delays above ~1.5 seconds were enough to hurt interaction quality.

In voice AI systems, natural conversation requires latency under 300–500 ms. Delays over a second feel like a technical break. In an industry discussion in February 2026, a large European e-commerce player reported that an increase from 400 ms to 1.2 s led to a 9–12% drop in session continuation.
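A latency budget like this can be monitored with a simple tail-percentile check. The sketch below is one possible approach, not a description of any particular product: the sample values are invented, and the 500 ms budget is taken from the upper end of the range cited above.

```python
import math

LATENCY_BUDGET_MS = 500  # upper end of the 300-500 ms range cited above


def percentile(samples, pct):
    """Nearest-rank percentile of a non-empty list of latency samples."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank
    return ordered[max(rank, 1) - 1]


def breaches_budget(samples, pct=95, budget_ms=LATENCY_BUDGET_MS):
    """True when the chosen percentile exceeds the latency budget."""
    return percentile(samples, pct) > budget_ms


if __name__ == "__main__":
    # Hypothetical round-trip latencies in milliseconds.
    observed_ms = [320, 410, 380, 1200, 450, 390, 400, 1350, 420, 370]
    print("p50:", percentile(observed_ms, 50))
    print("p95:", percentile(observed_ms, 95))
    print("over budget:", breaches_budget(observed_ms))
```

Checking a tail percentile rather than the mean matters here: in the invented sample, the median sits within budget while the slowest calls are the ones a customer would experience as a break in conversation.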

When AI mediates customer communication, degradation means lost revenue.

Most companies do not have a true multi-provider setup with equivalent behavioral guarantees. Switching models means retesting logic, adjusting prompt structures, and re-validating edge cases.

This is not a matter of convenience. It is real-time operational dependency.

Behavioral drift of models

Another dimension of risk is behavioral drift.

Providers regularly update models for safety, logic, multilingualism, or instruction stability. Changes are often positive. But even small shifts in response structure or classification confidence can affect downstream automation.
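One way to notice such shifts is to replay a fixed set of reference inputs after every model update and compare the new outputs with a stored baseline. The sketch below assumes intent classification with string labels; the case IDs, labels, and the 0.95 threshold are hypothetical.

```python
def agreement_rate(baseline: dict, current: dict) -> float:
    """Share of reference cases whose label is unchanged."""
    if not baseline:
        raise ValueError("baseline must not be empty")
    if baseline.keys() != current.keys():
        raise ValueError("baseline and current must cover the same cases")
    matches = sum(1 for case_id in baseline if baseline[case_id] == current[case_id])
    return matches / len(baseline)


def drifted_cases(baseline: dict, current: dict) -> list:
    """Case IDs whose label changed, sorted for stable reporting."""
    return sorted(cid for cid in baseline if baseline[cid] != current[cid])


if __name__ == "__main__":
    # Hypothetical labels recorded before and after a provider update.
    before = {"c1": "refund", "c2": "cancel", "c3": "billing", "c4": "refund"}
    after = {"c1": "refund", "c2": "cancel", "c3": "complaint", "c4": "refund"}
    rate = agreement_rate(before, after)
    if rate < 0.95:  # ASSUMPTION: alert threshold chosen for illustration
        print(f"drift alert: agreement {rate:.2f}, changed {drifted_cases(before, after)}")
```

A check like this only shows that behavior changed, not whether the change is an improvement; flagged cases still need human review.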

In early 2026 an international retail group that had partly migrated between providers for cost reasons spent over three months adapting prompt logic and re-tuning workflows. Differences in response structure and multilingual intent classification required re-validation of internal datasets.

Models are not fully interchangeable.

In the cloud, business logic stays in-house. In model-dependent systems, part of the reasoning logic is external. That changes the boundaries of control.

Geopolitics and sovereignty of reasoning

AI dependency also has a geopolitical dimension.

In 2025–2026, enforcement of the EU AI Act and localization requirements in Asia-Pacific and the Middle East intensified. For global companies, model choice is now tied to jurisdictional risk.

If a business relies on a single external LLM API operating in a limited set of regions, a change in data-handling rules can force an architectural redesign.

Cloud strategies planned for data residency. AI integrations need to plan for reasoning residency—where and under which legal framework inference runs.
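In practice, reasoning residency can be treated as a routing constraint: each inference endpoint is tagged with the jurisdiction it runs in, and requests are sent only to endpoints allowed for the data in question. The endpoint names and region codes below are hypothetical placeholders.

```python
# ASSUMPTION: endpoint names and region tags are invented for illustration.
ENDPOINT_REGIONS = {
    "provider-a-eu": "EU",
    "provider-a-us": "US",
    "provider-b-me": "ME",
}


def eligible_endpoints(endpoint_regions: dict, allowed_regions: set) -> list:
    """Endpoints whose inference region is permitted for this request."""
    return sorted(
        name for name, region in endpoint_regions.items()
        if region in allowed_regions
    )


if __name__ == "__main__":
    # A request carrying EU customer data may only be processed in the EU.
    print(eligible_endpoints(ENDPOINT_REGIONS, {"EU"}))
    # An empty result means no compliant endpoint exists and a redesign is needed.
    print(eligible_endpoints(ENDPOINT_REGIONS, {"APAC"}))
```

An empty result for a required jurisdiction is the early warning sign: it shows where a rule change would leave a workflow with no compliant place to run.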

In finance and healthcare this is an audit matter. If a provider changes model architecture without full transparency, the company must re-validate outcomes without access to model weights. That creates an asymmetry of control.

Cloud lock-in vs model lock-in

Cloud dependency is largely about infrastructure tooling. It can be replicated or migrated with enough resources.

Model dependency is different. Frontier models are effectively impossible to replicate in-house without huge investment. Even open-weight deployment in production needs serious compute and MLOps.

In the cloud, data remains the company’s key asset. In a model-dependent setup, part of the company’s cognitive capacity sits outside the company. That shifts the balance of power.

What a real AI strategy means in 2026

An AI strategy cannot be “we integrate APIs.” It should include:

- architectural abstraction between orchestration logic and models;

- multi-provider fallback;

- monitoring of behavioral drift;

- clear boundaries for AI-initiated actions;

- scenario planning for price or regulatory change.
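The first two items, abstraction and multi-provider fallback, can be sketched together. This is a minimal illustration under stated assumptions: a provider is modeled as any callable from prompt to text, and the two stub providers stand in for real vendor clients, whose APIs are deliberately not shown.

```python
from typing import Callable, Sequence, Tuple

# Orchestration code depends only on this signature, never on a vendor SDK.
Provider = Callable[[str], str]


class AllProvidersFailed(RuntimeError):
    """Raised when every configured provider has failed."""


def complete_with_fallback(
    prompt: str, providers: Sequence[Tuple[str, Provider]]
) -> Tuple[str, str]:
    """Try providers in order; return (provider_name, completion)."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # any provider failure triggers fallback
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


# Hypothetical stubs standing in for real provider clients.
def flaky_primary(prompt: str) -> str:
    raise TimeoutError("simulated latency spike")


def stable_secondary(prompt: str) -> str:
    return f"echo: {prompt}"


if __name__ == "__main__":
    chain = [("primary", flaky_primary), ("secondary", stable_secondary)]
    used, text = complete_with_fallback("Where is my order?", chain)
    print(used, "->", text)
```

Routing alone does not make providers equivalent. Because models are not fully interchangeable, each entry in the chain would still need its own validated prompts and regression tests before it can serve as a real fallback.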

Most important is defining what is core to the company’s intellectual value and what is an external service. If AI mediates customer communication, handles contracts, or triggers financial transactions, it becomes part of the execution layer. That layer needs the same resilience as financial or network infrastructure.
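Boundaries for AI-initiated actions can be enforced in code rather than in prompts. The sketch below assumes a simple policy with an action allowlist and a monetary ceiling; the action names and the $200 limit are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ActionPolicy:
    allowed_actions: frozenset   # actions the model may trigger at all
    max_amount_usd: float        # ceiling for autonomous financial impact


def authorize(policy: ActionPolicy, action: str, amount_usd: float = 0.0) -> str:
    """Return 'allow', 'needs_human_approval', or 'deny' for a proposed action."""
    if action not in policy.allowed_actions:
        return "deny"
    if amount_usd > policy.max_amount_usd:
        return "needs_human_approval"
    return "allow"


if __name__ == "__main__":
    # ASSUMPTION: example policy for a support agent.
    policy = ActionPolicy(frozenset({"send_reply", "issue_refund"}), 200.0)
    print(authorize(policy, "send_reply"))
    print(authorize(policy, "issue_refund", 950.0))
    print(authorize(policy, "close_account"))
```

Keeping this check outside the model means it behaves the same regardless of which provider generated the proposed action, or how that provider's model changes over time.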


Conclusion: dependency is not strategy

In 2023–2025, speed of AI adoption created advantage. In 2026 the structure of dependencies is visible.

Cloud dependency reshaped IT over a decade. Model dependency is reshaping control over the business in under three years.

Companies that confuse consuming APIs with strategic capability risk embedding external intelligence at the core of operations without adequate safeguards.

A real AI strategy must define boundaries of control, substitutability, governance, and geopolitical resilience. Otherwise, innovation can be scalable and effective yet remain a strategically brittle dependency.
